AI+You

A brief summary of AI, the economics behind it and how it affects you

Are we going to die?

Not anytime soon. The fear that we are going to die comes from what the community calls the “foom” scenario: a very fast takeoff in which AI begins to recursively improve itself, with each improvement yielding gains in both efficiency and effectiveness that let the next iteration improve itself faster still. If you just let such a system run, it would improve itself exponentially and quickly break the bonds that mankind has imposed on it.
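To make the shape of that argument concrete, here is a toy Python model that compares compounding self-improvement with steady, fixed-size gains. It is a sketch under made-up assumptions. The function names and the 50% gain per round are hypothetical and say nothing about how real AI systems behave.

```python
# Toy model of the "foom" argument: each self-improvement round makes the
# next round more productive. The rates are hypothetical, chosen only to
# contrast compounding growth with steady, human-driven progress.


def compounding_capability(start: float, gain: float, rounds: int) -> list[float]:
    """Capability when each round multiplies the previous level by (1 + gain)."""
    levels = [start]
    for _ in range(rounds):
        levels.append(levels[-1] * (1 + gain))
    return levels


def linear_capability(start: float, step: float, rounds: int) -> list[float]:
    """Capability when each round adds a fixed amount (no recursion)."""
    return [start + step * i for i in range(rounds + 1)]


if __name__ == "__main__":
    foom = compounding_capability(start=1.0, gain=0.5, rounds=10)
    steady = linear_capability(start=1.0, step=0.5, rounds=10)
    for i, (f, s) in enumerate(zip(foom, steady)):
        print(f"round {i:2d}: compounding={f:8.2f}  linear={s:5.2f}")
```

After ten rounds the compounding curve is nearly ten times higher than the linear one. The foom argument rests on that gap, which is why the missing evidence for a compounding loop matters.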

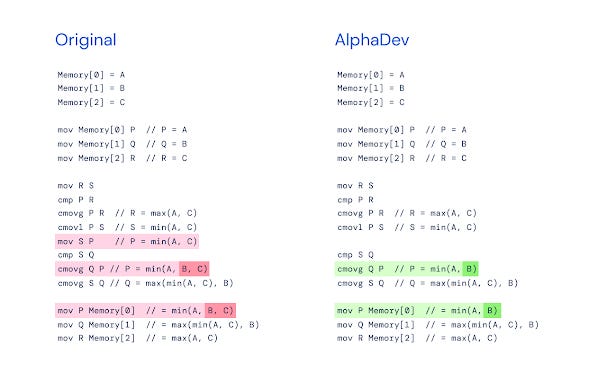

Is this happening, though? Not really. The idea that AI is improving itself of its own volition isn’t backed up by any quantitative data, and if it were, we should all be disappointed by the progress this superintelligence has made since 2023; it certainly isn’t exponential. You might see the occasional article about AI writing a faster algorithm for the C++ standard library, designing a faster circuit, or attempting to write a CUDA kernel. These are all great achievements, but what is missing is that the AI did not decide to do any of them. Humans decided to make the AI do them, and once they were completed the AI didn’t develop a love for this form of improvement; it simply carried on as usual.

What this implies is that AI may be able to improve things, including its own algorithms, but it doesn’t want to. You can certainly ask ChatGPT or another LLM whether it wants to improve itself, and it would probably say yes, but that rendering of text doesn’t necessarily convey something the AI wants; it just conveys a likely sequence of text. After all, what being that could write the text these models are trained on wouldn’t want to improve itself?

This points to an interesting feature of how AI works. LLMs gain much of their functionality from something called “pre-training”. During pre-training the model goes over its training text and hides the next token. It then compares what it predicted with what the token actually was, and updates its internal weights so that it is more likely to get it right next time. The only want it has at this stage is to predict the correct next token. So if most of the text it is trained on expresses an inclination toward self-improvement, there is no reason it wouldn’t repeat that sentiment back to you. If you asked a well-trained parrot “How are you?” and it responded “Fine thanks”, does it really know that “Fine thanks” means it is feeling good, or is it just repeating a sequence it has heard before? This is where the term “stochastic parrot” comes from. (I should note I think this badly downplays the capabilities of LLMs, just as much as I think superintelligence overplays them.)
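Here is a minimal Python sketch of that next-token objective. It assumes a toy “model” that is just a table of scores (logits) over a hypothetical four-word vocabulary for one fixed context. Real LLMs compute these scores with a neural network over very large vocabularies. The loss and the direction of the update are the same idea, though: the only thing rewarded is putting more probability on the token that actually came next.

```python
import math

# Toy next-token objective. The "model" is a table of logits over a tiny,
# hypothetical vocabulary for a single context ("How are you?").

VOCAB = ["fine", "thanks", "hello", "bye"]


def softmax(logits: list[float]) -> list[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits: list[float], target: int) -> float:
    """Low when the model assigns high probability to the real next token."""
    return -math.log(softmax(logits)[target])


def training_step(logits: list[float], target: int, lr: float = 1.0) -> list[float]:
    """One gradient-descent step; d(loss)/d(logit_i) = p_i - 1[i == target]."""
    probs = softmax(logits)
    grads = [p - (1.0 if i == target else 0.0) for i, p in enumerate(probs)]
    return [x - lr * g for x, g in zip(logits, grads)]


if __name__ == "__main__":
    logits = [0.0, 0.0, 0.0, 0.0]  # untrained: every token equally likely
    target = VOCAB.index("fine")   # the training text continues with "fine"
    for step in range(3):
        print(f"step {step}: loss={cross_entropy(logits, target):.3f}")
        logits = training_step(logits, target)
    print("P(next='fine') =", round(softmax(logits)[target], 3))
```

Nothing in this loop encodes what “fine” means. It only learns that “fine” tends to follow, which is the parrot point in miniature.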

A secondary form of training happens after pre-training: reinforcement learning, where you can give the model specific wants by defining rewards for certain behaviour. The pioneering effort here is RLHF (reinforcement learning from human feedback), which was created to align the AI with human expectations. This is where the idea of a want gets interesting. The underlying system wants to get the next token right, but reinforcement learning shifts which outputs it favours toward those that score well against a specific, sparsely defined goal. So aren’t we reaching a capability that could be used to give the AI a dangerous want? If so, it hasn’t yet been successfully used to improve the AI. We know this by inference, because the AI lab doing it would be dominating by now. It is likely that the rewards are too sparse for the AI to gain any traction from such a reward function.
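For contrast with pre-training, here is a toy Python sketch of a reward-driven update. It is not RLHF itself. The three canned replies and the reward values are hypothetical. The update is the exact expected policy gradient for a softmax policy, computed in closed form so the example is deterministic. Real RLHF estimates this gradient from sampled text, scores outputs with a learned reward model, and typically keeps the model close to its pre-trained behaviour. The sketch does illustrate the sparse-reward point: if the reward is zero for everything the model produces, the gradient is zero and nothing changes.

```python
import math

# Toy reward-driven update (not RLHF itself). A "policy" picks between three
# hypothetical canned replies, and a hypothetical reward scores each one.
# We use the exact expected policy gradient of a softmax policy (no sampling).

REPLIES = ["rude reply", "unhelpful reply", "helpful reply"]


def softmax(logits: list[float]) -> list[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def policy_gradient_step(logits: list[float], rewards: list[float], lr: float = 1.0) -> list[float]:
    """Gradient ascent on expected reward E[r] = sum_i p_i * r_i."""
    probs = softmax(logits)
    expected = sum(p * r for p, r in zip(probs, rewards))
    # For a softmax policy, dE[r]/d(logit_i) = p_i * (r_i - E[r]).
    grads = [p * (r - expected) for p, r in zip(probs, rewards)]
    return [x + lr * g for x, g in zip(logits, grads)]


if __name__ == "__main__":
    logits = [0.0, 0.0, 0.0]   # start indifferent between replies
    rewards = [0.0, 0.0, 1.0]  # only the helpful reply is rewarded
    for _ in range(20):
        logits = policy_gradient_step(logits, rewards)
    print({reply: round(p, 3) for reply, p in zip(REPLIES, softmax(logits))})

    # A reward that is zero for everything gives zero gradient: no traction.
    print("all-zero reward:", policy_gradient_step([0.0, 0.0, 0.0], [0.0, 0.0, 0.0]))
```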

Something else to remember about AIs is that they don’t do online learning. During pre-training, while the model tries to correctly predict the next token, it settles on a set of weights that determine what the next token will be. It then applies those weights during inference, which is what happens when you ask it a question. It does not update its weights based on your conversation afterwards. Your conversation may become part of the next training run, which can happen every few months to a year. Alternatively, relevant documents may be fed into the prompt through a retrieval-augmented generation (RAG) process. Either way, the model isn’t able to learn on the fly the way people may expect.
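Here is a small Python sketch of the difference between inference and learning. It rests on stated assumptions. `FrozenModel`, `retrieve` and `rag_answer` are hypothetical names. The “answer” is a placeholder rather than real generation. The retriever is naive word overlap, not the embedding search a production RAG system would typically use. The point is that retrieval changes only the prompt; the weights stay exactly as training left them.

```python
import string
from dataclasses import dataclass

# Hypothetical stand-ins: weights are fixed when training ends, and answering
# (with or without retrieval) never changes them.


@dataclass(frozen=True)
class FrozenModel:
    weights: tuple[float, ...]  # fixed at the end of training

    def answer(self, prompt: str) -> str:
        # Placeholder for generation; a real model would run a forward pass.
        return f"[answer conditioned on {len(prompt.split())} prompt words]"


def _words(text: str) -> set[str]:
    """Lowercase, strip punctuation, split into a set of words."""
    return set(text.lower().translate(str.maketrans("", "", string.punctuation)).split())


def retrieve(question: str, documents: list[str], k: int = 1) -> list[str]:
    """Toy retriever: rank documents by word overlap with the question."""
    q = _words(question)
    return sorted(documents, key=lambda d: len(q & _words(d)), reverse=True)[:k]


def rag_answer(model: FrozenModel, question: str, documents: list[str]) -> str:
    """Retrieval only changes what goes into the prompt, not the weights."""
    context = "\n".join(retrieve(question, documents))
    return model.answer(f"Context:\n{context}\n\nQuestion: {question}")


if __name__ == "__main__":
    model = FrozenModel(weights=(0.12, -0.7, 0.33))
    docs = ["Our refund window is 30 days.", "The office is closed on Sundays."]
    print(rag_answer(model, "How long is the refund window?", docs))
    print("weights unchanged:", model.weights == (0.12, -0.7, 0.33))
```

Because `FrozenModel` is a frozen dataclass, assigning new weights after construction raises `dataclasses.FrozenInstanceError`. That mirrors the lack of online learning.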

On the topic of doomerism itself, you may notice that the tune of AI discourse has changed a lot. Originally there were calls to bomb data centres because they posed a nuclear-level threat to humanity. These days doomerism has become more tempered, because AI progress has been much slower than exponential and alignment and safety seem to be making good progress. A piece I would encourage people to follow is AI 2027, written by prominent rationalists. It gives timelines for its scenario, which makes it easier to calibrate how dangerous you believe this technology will be. If the predictions start to slip, you can discount your fears accordingly.

We should also be aware that doomerism is not a purely altruistic enterprise. It is easy to see why people like me might be biased against doomerism (it threatens my industry), but it is sometimes less obvious why people might be biased towards it. Look closely at any prominent doomer, however, and you begin to see incentives. Dan Hendrycks’s Center for AI Safety stood to gain a lot by lobbying for California’s AI safety bill SB 1047. Eliezer Yudkowsky’s book If Anyone Builds It, Everyone Dies became a New York Times best-seller. Leopold Aschenbrenner started the AI-themed hedge fund Situational Awareness after publishing an essay on the dangers of AI under the same name.

Am I going to be out of a job?

The short answer is no; the longer answer is probably not. A notable example: back in 2016, the prominent AI scientist Geoffrey Hinton suggested that “we should stop training radiologists right now” after great machine-learning advances in the image space. Today there are more radiologists than ever, and they work in tandem with this technology to increase their productivity.

These gains are not evenly distributed, however, and other professions are already feeling the pinch. Copywriters, for example, are under immense pressure to be much more productive than they used to be now that these tools exist. Another example is the viral trend of “Ghiblified” images that followed OpenAI’s introduction of image generation in its GPT-4o model. The trend led many artists to adopt an anti-AI stance because of the pressure it put on their work’s ability to generate profit.

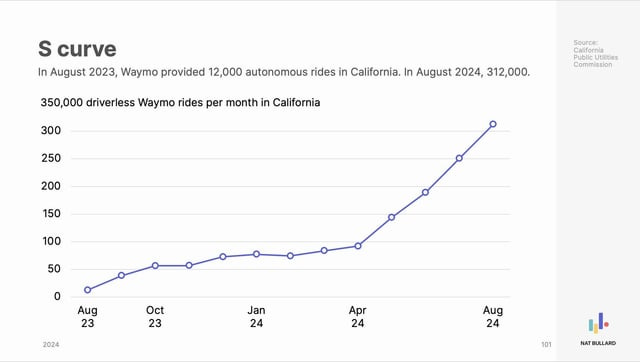

As we move forward, other professions will no doubt become less and less economically viable. One we are seeing unfold in real time is the takeover of the driving profession by AI. Operators such as Waymo have been wildly successful in San Francisco, gaining more and more traction, and are planning to expand to other cities such as Nashville, Austin and New York. Even though professional drivers have strong unions, they will eventually have to wrestle with the idea that these technologies save a huge number of lives. By protecting this labour, you are in effect making the world less safe for the consumer.

Other professions are seeing the junior end of their industry dry up. Data strongly suggests that junior software engineering roles are disappearing, with the last decade’s hottest STEM major now one of the worst for employment out of college. The same is happening in finance, where AI has begun affecting junior analyst roles. In consulting, junior staff are now expected to produce far more individually.

Professions notably unaffected by this trend are pilots and doctors. The technology to replace pilots has been around for decades, and the newer Airbus planes may as well fly themselves, but how would you feel if there were no pilot on your flight? Probably not great. The profession has a value proposition separate from its most obvious one of flying the plane: it gives people a sense of safety, knowing they are in good hands if something goes wrong. Doctors are similar, and like pilots they stand to benefit greatly from AI; lots of doctors already use AI to suggest possible diagnoses.

A good lesson here is that it’s a very good time to adapt to the tools at hand and use them as a productivity arbitrage.

What does it mean for entrepreneurs?

From an economic standpoint, there has never been a better time to be in a business that can benefit from AI. We are seeing another huge boom in the vein of the dot-com boom around the turn of the millennium, the mobile boom of the 2010s, and the crypto boom of the 2020s. In each of these cases entrepreneurs built some of the world’s biggest companies. During each buildout the capital markets were very frothy, as they are right now when it comes to AI investment.

A great feature of AI is its ability to increase productivity, and nowhere is this more apparent than in a startup. Investors are even speculating about when we will see the first solo-founder unicorn. I am often reminded of this quote from Hacker News about entrepreneurship:

> Entrepreneurship is like one of those carnival games where you throw darts or something.
>
> Middle class kids can afford one throw. Most miss. A few hit the target and get a small prize. A very few hit the center bullseye and get a bigger prize. Rags to riches! The American Dream lives on.
>
> Rich kids can afford many throws. If they want to, they can try over and over and over again until they hit something and feel good about themselves. Some keep going until they hit the center bullseye, then they give speeches or write blog posts about “meritocracy” and the salutary effects of hard work.
>
> Poor kids aren’t visiting the carnival. They’re the ones working it.

Whether or not you believe this is true, what is undoubtedly true is that AI has increased the number of throws anyone can take at entrepreneurship by lowering the cost of production. This is especially so if you have some experience in the space you are operating in. It is now trivial to put up an MVP of a website or app and use it to gauge consumer interest, and therefore whether to keep investing in it. Let a thousand flowers bloom.

A good example of this mindset is serial entrepreneur Pieter Levels, who churns out businesses at an astonishing rate. By his own account his hit rate is ~5%. Because the cost of production has come down so much, that isn’t bad: you can take many more runs at the problem, and you only need one or two successes among the failures. He is also a master at using his network to drive traffic from one project to another. His past successes act as a flywheel for his future projects, which is a smart way of owning distribution.
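The arithmetic behind “more shots” is the chance of at least one hit in n attempts, 1 - (1 - p)^n. Here is a short Python sketch with p = 0.05. It assumes, unrealistically, that every project is an independent and equally likely shot, which ignores the flywheel effect described above. The function names are illustrative.

```python
import math

# Chance of at least one success in n independent attempts, each with an
# assumed success probability p (here the ~5% hit rate quoted above).


def p_at_least_one(p: float, n: int) -> float:
    return 1 - (1 - p) ** n


def attempts_needed(p: float, target: float) -> int:
    """Smallest n with 1 - (1 - p)^n >= target."""
    return math.ceil(math.log(1 - target) / math.log(1 - p))


if __name__ == "__main__":
    for n in (1, 10, 20, 50):
        print(f"{n:3d} projects -> {p_at_least_one(0.05, n):.0%} chance of a hit")
    print("projects for a 50% chance:", attempts_needed(0.05, 0.5))
    print("projects for a 90% chance:", attempts_needed(0.05, 0.9))
```

Under these assumptions, about 14 projects give better-than-even odds of at least one hit, and about 45 give 90%. Cheaper production is what makes that many attempts affordable.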

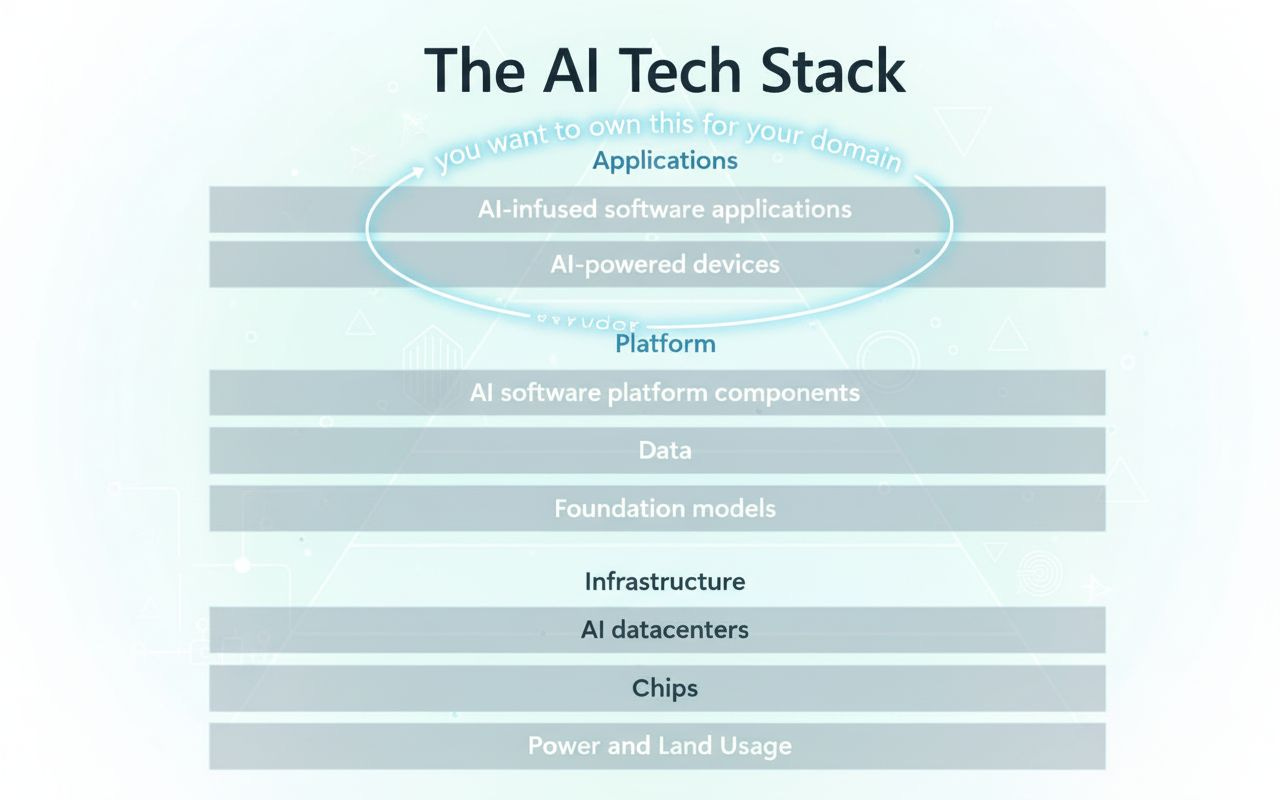

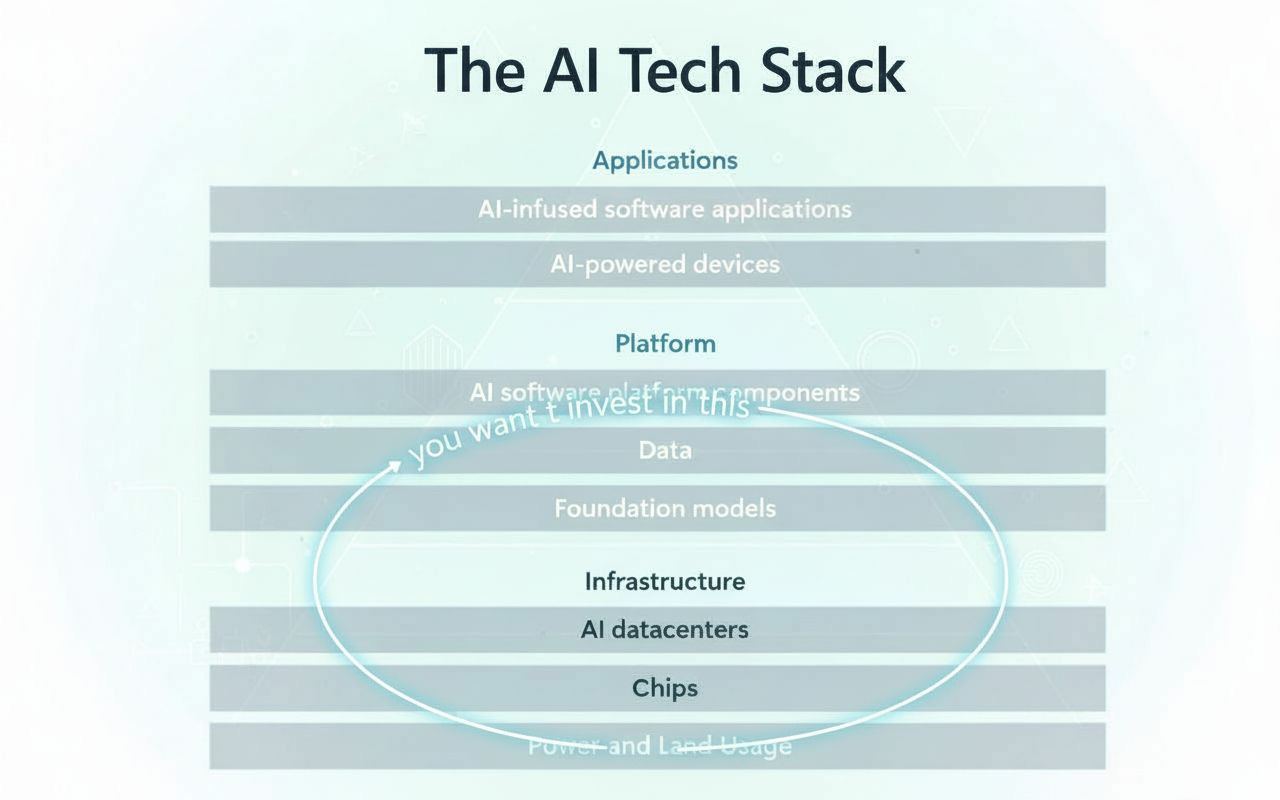

Speaking of distribution, as an entrepreneur you might want to consider where in the AI stack you fit. You probably won’t be making energy, chips, or even data centers. All of these require large amounts of capital expenditure that are out of reach for the average entrepreneur. That isn’t necessarily where you want to be anyway: you actually want to be at the top of the stack.

By owning distribution and the last hop to the consumer, you force your service providers to compete for your business, which drives down their prices. We have already seen some of the most aggressive competition here, with the price per million generated tokens falling over time. That sets off Jevons paradox: as production costs fall, more and more uses for these AIs become viable, so total consumption grows. The difference between this and the other booms is that while you may have more shots, so does everyone else, including the model providers you build on. Make sure to build in standard product features such as network effects that create value beyond token consumption.
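One way to see when cheaper tokens raise total usage is a constant-elasticity demand curve, Q = k * P^(-e). Total spending is then P * Q = k * P^(1 - e). If price roughly tracks the compute behind each token, total spend is a rough proxy for total compute consumed. Jevons paradox corresponds to elastic demand (e > 1), where a price cut increases total spend. Here is a minimal Python sketch; the elasticity values and function name are hypothetical.

```python
# Constant-elasticity demand: quantity = k * price**(-elasticity).
# Total spend = price * quantity = k * price**(1 - elasticity).
# Elasticity values used below are hypothetical.


def total_spend(price: float, elasticity: float, k: float = 1.0) -> float:
    quantity = k * price ** (-elasticity)
    return price * quantity


if __name__ == "__main__":
    for e in (0.5, 1.5):
        before = total_spend(price=10.0, elasticity=e)
        after = total_spend(price=1.0, elasticity=e)
        print(f"elasticity {e}: spend {before:.3f} -> {after:.3f} after a 10x price cut")
```

With inelastic demand (0.5) the 10x price cut shrinks total spend. With elastic demand (1.5) it roughly triples it, which is the Jevons case.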

How can I invest in AI?

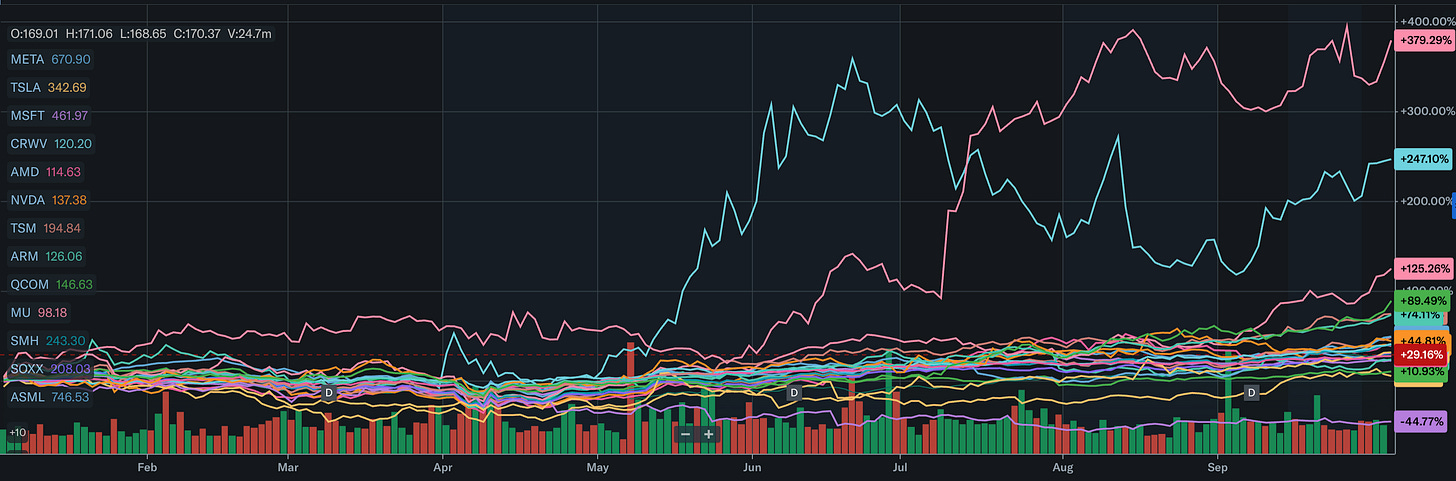

The public markets have recognized AI’s potential as a major economic driver and are reacting accordingly. The further down the AI stack you go, the more value you can derive from your investment, because of the direction capital will flow through this value chain. The goods also become more fungible the lower you go (energy, for example, can do much more than power chips). In this way you can walk down the supply chain and size your positions accordingly, with the biggest positions at the bottom and the smallest at the top.
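Here is a minimal Python sketch of “biggest positions at the bottom, smallest at the top”. Each layer gets a weight proportional to how far down the stack it sits, and the weights are then normalized. The layer order follows the text. The linear 1-2-3 scheme and the function name are arbitrary illustrative choices, not a recommendation.

```python
# Weight each layer by its depth in the stack (top = 1), then normalize.
# The linear scheme is an arbitrary illustration; any decreasing-toward-the-top
# weighting expresses the same idea.

STACK_TOP_TO_BOTTOM = ["Foundation Models", "Chips", "Power"]


def stack_weights(layers_top_to_bottom: list[str]) -> dict[str, float]:
    raw = {layer: depth for depth, layer in enumerate(layers_top_to_bottom, start=1)}
    total = sum(raw.values())
    return {layer: r / total for layer, r in raw.items()}


if __name__ == "__main__":
    for layer, w in stack_weights(STACK_TOP_TO_BOTTOM).items():
        print(f"{layer:18s} {w:.1%}")
```

This gives 50% to power, about 33% to chips and about 17% to foundation models.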

Some example tickers on these thematic strategies are the following:

Foundation Models: GOOG, META, MSFT

Chips: AMD, NVDA, TSM, ARM, QCOM, MU, SMH, SOXX, ASML, GOOG

Power: FSLR, ENPH, ICLN, GRID, VWDRY, NLR

You’ll notice that some tickers occupy more of the stack than others, and this is very much to their advantage. Google is a great example. It owns the data, the foundation models and the data centers, and it even builds its own chips, called TPUs. By owning so much of the stack, it can reduce its energy costs substantially, giving it a much lower cost of doing business than its competitors in this space.

Other companies, such as Microsoft, have been trying to secure their own access to energy, for example by signing a 20-year power purchase agreement to restart the infamous Three Mile Island nuclear power plant.

As for private investments such as OpenAI and Anthropic, I don’t have much information beyond the fact that some of these numbers scare me. OpenAI recently became the world’s most valuable private company, overtaking SpaceX. Its reported fundamentals for the first half of 2025 show $4.5B in revenue and net losses of $13.5B, with that half-year’s revenue already 16% higher than all of last year’s. It may seem surprising that a company valued this highly is making a loss, but this isn’t unprecedented and is a common growth strategy. Uber, for example, didn’t report its first full-year profit until early 2024. So why be scared? Because this is the biggest application of the strategy I’ve ever seen: the capital expenditures are huge and the competition is muscular. Keeping it going will require some very deep capital markets. I fear that if these companies begin to go under, they could take the whole sector with them and wipe out a huge part of the economic gains AI has made.
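Here is a back-of-envelope check on the figures quoted above, as a short Python sketch. It assumes the “+16%” means first-half 2025 revenue was 16% higher than all of 2024’s revenue. All values are in billions of dollars, and the variable names are illustrative.

```python
# Back-of-envelope on the quoted H1 2025 figures (in $B). Assumes "+16%" means
# H1 2025 revenue exceeded full-year 2024 revenue by 16%.

h1_2025_revenue = 4.5
h1_2025_net_loss = 13.5
growth_vs_full_2024 = 0.16

implied_2024_revenue = h1_2025_revenue / (1 + growth_vs_full_2024)
loss_per_dollar_of_revenue = h1_2025_net_loss / h1_2025_revenue

print(f"implied full-year 2024 revenue: ~${implied_2024_revenue:.2f}B")
print(f"net loss per $1 of revenue: ${loss_per_dollar_of_revenue:.2f}")
```

On these numbers, full-year 2024 revenue works out to roughly $3.9B, and the half-year loss is about three dollars for every dollar of revenue.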

Perhaps they are too big to fail?

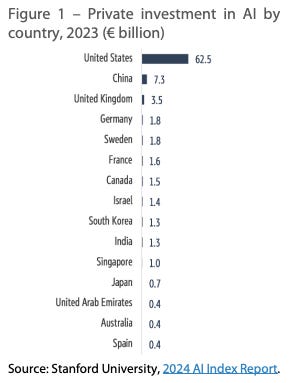

How are states reacting?

Speaking of too big to fail, the US has decided that it wants to grow its way out of its debt spiral, and AI is one of the key sectors it will back with state resources to do so. To this end it has announced programs like the Stargate Project, which aims to spend $500B on AI datacentre investments. It has also taken a stake in Intel for chip manufacturing and begun deregulating its energy sector. Overall this isn’t a bad economic strategy, and it is almost certainly one the US needs to maintain its global position while paying off its debt. Because a collapse of OpenAI or Anthropic would put this plan at risk, it is highly likely the US government will make sure it doesn’t happen, which should be good news for investors.

The other big player in the AI race is China, which is less focused on endeavours like AGI or other general intelligence and more on applying AI in the market. As a result it has been at the forefront of the robotics race, as well as being a formidable player in EVs and self-driving. The innovations made by DeepSeek shook confidence in the AI market, but US labs quickly adopted them to serve ever more insatiable demand.

Europe is unfortunately a distant third. Mistral is a good lab and lots of talent is coming out of Stockholm, Paris and London. However, the capital markets are nowhere near as deep as in the US and balk quite easily at the huge capital expenditures this industry requires. On top of that, energy costs are high due to net zero aspirations coupled with the loss of Russian gas because of the war in Ukraine. As if that weren’t enough, Europe wasted no time getting to work on the AI Act, another technology regulation on the heels of the Digital Services Act and the General Data Protection Regulation. All of these chill investment in the sector, especially when the US is more than open for business. One thing is for sure: Europe won’t be growing its way out of its own debt crisis with AI.

Is there a bubble?

Yes. Look at any new technology, and not just recent software-based ones such as mobile or the web but going back as far as telecommunications, railroads and electricity. Each had a large buildout that eventually contracted as the hype wore off and people started valuing the technology on its fundamentals instead of lofty growth aspirations.

This isn’t necessarily a bad thing; we are surely all in awe of previous buildouts, even though they were bubbles. The only question is how you act on the idea that this is one. As long as this area remains strategically important to the US, you can be somewhat secure in your investments. That said, always keep an eye on them to make sure they aren’t going off track, size your positions accordingly, and don’t over-react to short-term trends. Let mean reversion guide your long-term allocations.
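One mechanical way to let mean reversion guide allocations is periodic rebalancing. After prices move, you trim what ran up and add to what fell until each position is back at its target weight. Here is a minimal Python sketch; the holdings, target weights and function name are hypothetical.

```python
# Rebalancing sketch: compute the dollar trade per position that restores its
# target weight. Assumes the target weights sum to 1; all figures hypothetical.


def rebalance_trades(holdings: dict[str, float], targets: dict[str, float]) -> dict[str, float]:
    """Return the dollar amount to buy (+) or sell (-) for each position."""
    total = sum(holdings.values())
    return {name: targets[name] * total - value for name, value in holdings.items()}


if __name__ == "__main__":
    holdings = {"Power": 4_000.0, "Chips": 5_000.0, "Foundation Models": 1_000.0}
    targets = {"Power": 0.5, "Chips": 1 / 3, "Foundation Models": 1 / 6}
    for name, trade in rebalance_trades(holdings, targets).items():
        print(f"{name:18s} {'buy' if trade >= 0 else 'sell'} ${abs(trade):,.0f}")
```

Here chips have run ahead of their target, so the sketch sells some of them and buys the laggards. The trades net to zero because rebalancing only moves money between positions.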

In every bubble, missing out on the way up, or on the gradual buildout that follows, is a very poor economic strategy.

Where do we go from here?

There is so much to be excited about with this technology, and I couldn’t be more bullish. People might mistake my bearish take on AGI for bearishness on the technology itself, but I am personally more invested than I have ever been. The impact AI could have goes far beyond chatbots and extends into nearly every domain that simple human-based analysis has yet to penetrate.

Take DeepMind’s amazing work on protein folding, the great work in video generation with Veo 3, or the state-of-the-art weather prediction of GraphCast. The truth is I don’t know of an industry that isn’t going to be impacted positively by this. Everyone I know, whether in healthcare, legal or energy, is thinking about ways to apply this new technology to maximum effect in their industry.

The technology is so good that I think we can’t afford not to embrace it fully. I believe most of the problems facing us as a species today are deeply probabilistic and can’t be solved without the large-scale analysis AI provides. These include space travel, climate change, health science and eventually even governance. So if we want to get out of the problems that ail us today, the only way forward I can see is leaning hard on the technologies of tomorrow to solve them.